Understanding automatic differentiation

Computers need to calculate derivatives using algorithms that are rightfully “computer-like” and differ from the common methods used by humans. This technique, known as automatic differentiation, is a general formulation that has been used in a number of domains such as finance, computational fluid dynamics, control systems and engineering design. Even though it finds its use wherever scientific computing is done, we will be studying from the realm of machine learning.

Necessary primer

For a disclaimer, this article is not aimed to teach you neural networks or optimization algorithms but rather how gradients are calculated efficiently in computers and why it makes a difference in the context of neural networks. This section is for those who have no clue what neural networks are or how they are trained. Feel free to skip to the next section.

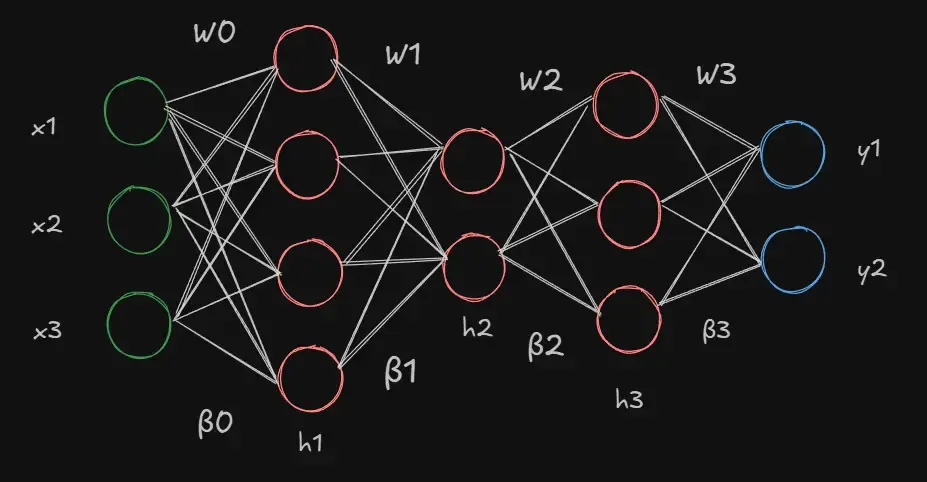

A neural network, in brutally simple terms, is a mathematical model that learns patterns from data and helps us make predictions or useful insights on new, unseen data. We start with describing a kind of neural network known as the multi-layer-perceptron. The network takes in a set of inputs () which it feeds into a number of layers and finally gives us an (or many) output(s) (). Each of these layers contains a number of hidden units (neurons) that each takes in input from the neurons of the previous layer, performs an operation on this input and passes it to the neurons of the next layer. When each neuron of a layer is connected to all neurons in its preceding and succeeding layer, we call it a fully connected network.

We can define the network as a function , where represents the inputs to the network while represents the set of parameters of the model (weights and biases). At every neuron, we carry out three simple operations.

- Multiply the incoming input with the weight specific to that input. ()

- Add a bias to this term. () This result is called the pre-activation.

- We finally take this linear term and feed it into a non-linear function (, sigmoid or ReLU) known as an activation function (). These functions allow the model to capture the non-linearity present in the data.

If there are neurons in the previous layer of a fully connected network, a neuron in our current layer gets inputs and thus weights for each with one bias term for the entire neuron. An individual neuron’s output (activation) would look like this.

Now consider the combined output of all neurons in the first layer. If we have an input vector , neurons, a weight matrix with each row representing the weights of one neuron, a bias vector , then we can represent the output of the layers as

with the final output (after the last layer) having no activation . As you can notice, each layer takes in the output of the previous layer as its input (function composition). This is why the set of weights and biases together are called the parameters or learnable components of the neural network () as changing any one value would invariably change the output of the model.

We can measure whether a model is learning well by matching its predictions against the “actual truth”. The “actual truth” is the real result () corresponding to the input data (). The distance to this is estimated by a metric known as the “Loss”, which is the difference between the actual value and the predicted output of the model . A high loss indicates a large discrepancy between the model’s output and the real value while a loss of near zero means the model’s predictions are very accurate.

The simplest of loss functions is the Mean Squared Error. It is the average of the squared differences between actual values and predicted values.

Our primary objective is to minimize this loss function. The loss function space over parameters (scalar field) can be imagined as a valley with hills, slopes and ravines like a real landscape with several perturbations on the surface of the terrain. We need to choose parameters that take our model’s loss to the lowest point of this terrain (a global minima). There have been several algorithms that attempt to learn or “uncover” these parameters but the most practical and universally used algorithm in today’s era is a technique called Gradient Descent. Starting at any point on the surface (taken care of by proper initialization methods), we iteratively calculate the slope of the loss function or formally the gradient, which is the vector of partial derivatives with respect to each weight and bias on that point. This involves calculating the gradient of the loss representing the loss for the -th sample

for every layer and every training sample.

The gradient vector tells us how much the loss would change if we nudged that specific parameter slightly. It points in the direction of steepest increase in the loss. Since we need to minimize the loss, we update the parameter vector by multiplying the gradient vector with a scalar known as the learning rate () and then subtracting it from our current parameter vector. This is how we “descend” down the slope and keep tweaking our model’s parameters on every iteration to improve results.

With all of this in our mind, we can now focus on what this article is really about. Calculating those gradients!

Finite differences and symbolic differentiation

The definition of a typical derivative of a univariate function that we learn in our calculus classes is as follows.

This equation, known as finite differences, tells us just how fast (the rate) the function changes instantaneously at a certain point. It is equivalent to the slope of the tangent () at that point as the numerator represents the change in the value of the function (perpendicular) and the denominator is the infinitesimal increase in (base).

For the case of gradient descent, we can represent the derivative of the loss function with respect to a parameter () like so.

where is the -th unit vector (vector where the -th element is and the rest ). We include it for maintaining an important mathematical consistency but will ignore this for the remainder of the section.

The primary issue in finite differences comes from the rise of two kinds of errors, namely truncation and rounding, both of which are represented as functions . Because computers cannot really tend to like we formally would in theory, we are forced to assign a tiny but finite value for h and can only approximate the true mathematical derivative by taking the taylor series expansion. The former of the two errors is directly due to the extra terms present in the expansion of .

If we substitute this into , we get

As we are only interested in the first derivative, the terms following contribute to the error. We can mitigate this truncation error by taking the central difference in place of a forward () or backward difference ().

Since half the terms cancel out, the truncation error in this case will be much lesser.

Taking a larger value of h will fail to approximate the derivative well and lead to an increase in the truncation error. If we decrease the value of , the truncation error decreases but in turn, increases the rounding error.

The rounding error arises from the infamous inability of computers to handle floating point arithmetic. As we shrink to an extremely small value, subtracting and , which almost become identical values often results in computer programs (typically handle double-precision floating point numbers but is dependent on compiler and machine specifications) rounding off to zero and a complete loss of information. The smaller the value of , the higher the numerical instability.

The direct and inverse relationship of the truncation error and rounding error respectively with leads us to a trade-off where we must find the optimum value of to get the most out of the derivatives.

Additionally, for a function , the time complexity to compute the gradients is roughly , which is quite inefficient if we take into account that neural networks can contain billions of parameters and would blow up the computation time massively. And all of these inaccuracies exacerbate in the case of higher (2nd or more) derivatives.

To address these problems, we turn to a second approach known as symbolic differentiation. In reality, this isn’t much different from how we handle differentiation algebraically. Consider the functions,

We can differentiate a function using the chain-rule by writing a program

to rearrange terms according to the rules of calculus. But neural networks have hundreds of layers

that result in functions that are composed of hundreds of other functions. While we don’t have to

deal with precision errors, unwrapping all of these compositions using the chain-rule would again, blow up the number of terms and compute required (known as

expression swell). It would also require implementing a caching system to avoid redundant

calculations and increase complexity.

And needless to say, the performance for higher-order derivatives would be terrible.

Software like Wolfram-Alpha uses symbolic differentiation for its operations but applies various simplification functions after each operation recursively. This includes factorization or cancelling terms to reduce expressions to compact “nice” forms. But such measures are mostly heuristical and often slow since they try many strategies and provide no guarantee of finding simple forms, if “simple” is even something well-defined in the first place. Clearly, this is not feasible for machine learning.

This is where we take a step back and start thinking programmatically.

Automatic Differentiation

Consider a function . We can express it as the sum

of three smaller expressions , and .

Such decomposition into intermediate values (called primals) is exactly what

automatic differentiation is about.

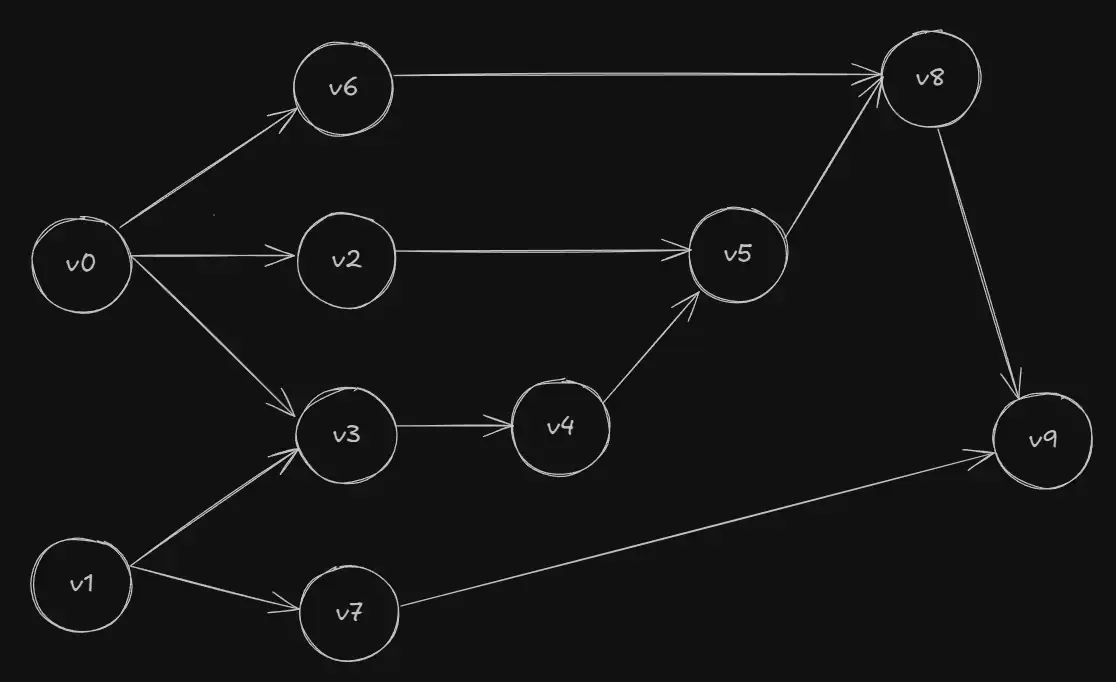

Autodiff constructs a computational graph (Directed Acyclic Graph) of these primals to track how each value depends on the next. The nodes comprise of input, output and all intermediate variables while the edges of the graph maintain the flow or direction of computation.

We can examine this by creating an evaluation trace, which is a record that keeps track of the primals at each primitive operation. It starts by building the expression from its scalar inputs and combining them to form larger expressions using simple binary operators or transcendental functions like and .

For the function , let the value of and be and respectively. Then its evaluation trace would be as follows.

Forward Mode and Dual Numbers

In forward mode, we calculate the primal and its derivative (also called the tangent) at every node in the computational graph. These tangents represent the partial derivative of a primal with respect to a specific input variable (say ). As we move through the graph, we apply the chain rule to propagate these derivatives, ultimately arriving at the derivative of the entire function with respect to the chosen input.

By performing a forward pass on with respect to , we get the following tangent trace.

The dependency properties of DAG assures us that any derivative needed to calculate the current primal’s derivative has already been calculated at a previous node. Thus, the tangent of the final value is equal to the partial derivative of the whole function with respect to at ().

This leads us to an important advantage of AD over symbolic differentiation. We saw through the evaluation trace that AD computes the values dynamically during runtime by traversing the graph. It does not really care how the computation is performed. It only records what values went into each operation, their outputs and the chain of operations. This means that we could write code that involves conditional branching, looping and recursions as long as they involve a sequence of mathematical operations and produce numerical values. Essentially, this makes AD “blind” to control flow logic as it is only concerned with the values that result from the execution of a branch regardless of the path taken. Symbolic differentiation would struggle in such cases as it would start finding a single expression to handle all branching cases and would inevitably introduce non-differentiable points. This makes AD incredibly powerful for a vast number of applications.

The idea of forward mode AD is reflected in the analytical method called Dual Numbers. A dual number is defined as where , with and being the real and dual parts respectively. The coefficient of can also be denoted as as it carries the derivative. Assuming the is necessary because when we plug a dual number into the taylor series, all the terms containing or higher powers should vanish, leaving us with only . This can also be demonstrated by multiplying two dual numbers.

The term is merely the product of the real parts (primal) while the coefficient of is nothing but the primal’s derivative (tangent).

Let’s take the example of a function . To evaluate the derivative at the point (1, 2), we consider consider and . Substituting these into the function, we get

This tells us that the value of the function at () is , derivative with respect to is , derivative with respect to is and the mixed second-order partial derivative is .

For a function that can represent a neural network with inputs and outputs, we can consider a matrix that contains the partial derivatives of all outputs with respect to each input . This matrix of size is known as the Jacobian ().

It tells us how each input influences an output or how sensitive an output is to change in a particular input. In forward-mode AD, each run computes the derivative of all outputs with respect to one particular input. Therefore, the -th column of the Jacobian represents a single forward pass associated with the input and each -th row corresponds to the gradient of one output with respect to all inputs.

As every forward pass builds one column of the Jacobian, it takes time to build the entire matrix. So if we need to get the directional derivative (rate at which the function changes in a given direction at a particular point, basically the projection of the gradient along ) of the function with respect to a vector (known as seed tangent vector), we would need to calculate the Jacobian of the function and multiply it with the given vector.

As we concluded earlier, doing this naively would be quite inefficient. This is exactly what forward mode AD helps us avoid. It calculates the Jacobian-Vector-Product () at the cost of a single forward pass without ever needing to calculate the full Jacobian matrix, which would require extra passes.

The JVP is a column vector (in our case) with rows (matrix product of and ). For a function like a loss function, the JVP is a scalar. It contains information on how the outputs change when the inputs are pushed along the direction of . In short, each component is the directional derivative of an output along . Furthermore, if we choose the seed tangent vector to be the -th unit vector, we get the -th column of the Jacobian that contains the gradients with respect to input . But if we choose a general vector in space, we get a linear combination of columns of the Jacobian. In our example of the evaluation trace, we chose to calculate the partial derivatives with respect to a single input , which aligns with the unit seed tangent vector.

To implement Jacobians in the evaluation trace, we chain the local Jacobians calculated at each primitive as we traverse the graph. A local Jacobian () is the matrix containing the partial derivatives of the local outputs with respect to each local input. The local tangent () is the product of this local Jacobian with the incoming tangent vector . Simply put, it is the directional derivative of the primal when applied in the direction of .

Mathematically, the JVP is the product of the local Jacobians in the direction of v. If is a composition of many functions ,

Since matrix multiplication is associative, AD is equivalent to performing the multiplications step-wise.

To summarize, we start with the seed tangent vector , propagate the tangent vector at each primitive operation and finally end up with the final tangent which is our JVP.

If we consider a function , we can calculate its JVP in the direction of the tangent vector in one single pass as follows.

The value of final tangent () at () is equal to

In practice, AD should compute and carry the primal calculations during the forward pass, producing values at each step. But for a better demonstration of the trace, I instead substituted the values in the final expression. This is mathematically consistent.

Forward mode AD does not suffer from numerical instability issues related with the traditional differentiation techniques and is usually preferred when the number of inputs is far smaller than the number of outputs (). For example, a function would only require one forward pass to obtain the Jacobian. But for neural networks that contain billions of parameters (inputs) and only a small range of outputs (usually just ), we are dealing with the opposite. Enter Reverse Mode AD.

Reverse Mode

Reverse Mode AD is famously known as backpropagation, an algorithm developed in the 20th century that still serves as the backbone of all modern neural networks. Instead of propagating tangents as in forward mode, we propagate adjoints from the output to the input. An adjoint represents the accumulated “sensitivity” of the output with respect to a given primal, computed via the chain rule. So every primal gets paired with an adjoint that tells us how much the function changes when the primal is changed. For a scalar output function , adjoints are simply the partial derivatives of the output with respect to each primal. We will understand this better with an example.

Reverse mode consists of a forward pass and a reverse pass. In the forward pass, we store the primitive operation (and its dependencies) of each primal to form the computational graph. If we take our previous function , we would still have the same primals from our forward pass.

Now let’s see what the backward pass would look like. We start from the scalar output (taking its derivative to be equal to 1 as ) and work our way backwards using the chain rule.

At every node (primal ), we accumulate the gradients of the parent primals by taking the partial derivative of the child value () with respect to each parent and multiplying it by the gradient of the child itself, also called the downstream gradient. In this way, any input primal that contributed to any output primal gets their gradient updated and we eventually reach the last nodes (inputs) to get the derivative of the function with respect to these inputs ( and ). The accumulation (+=) ensures that we are considering the gradient of every child if the primal has multiple children. If you look closely at the last step of the evaluation trace, we do and to update the gradients because we had already finished calculating the initial gradients of all the primals in the fifth step itself.

We know the loss function is a scalar output function . As we discussed in the first section of this article, our optimization algorithm relies on calculating the gradient of this loss function with respect to the parameters (inputs).

This can be achieved by multiplying the gradient of the loss taken with respect to

the model’s output

() with the Jacobian of the loss (), a direct result of

the chain rule. The quantity is sometimes called the error signal and is a

row vector with dimensions ().

This product is a familiar sounding quantity known as the Vector-Jacobian-Product (). Similar to the JVP, reverse mode AD allows us to compute the VJP without the need of a full Jacobian by propagating the adjoints backwards through the graph. A single reverse pass gives us the gradients of the loss function with respect to the parameters needed for our gradient descent. Forward mode AD would require one JVP per parameter to do the same.

A JVP is the adjoint of the VJP. So in principle, we can get the forward pass (JVP) by computing two backward passes. It is like computing the adjoint of the adjoint.

This is exactly why we say adjoints are the “sensitivities” of the function (loss in our case) with respect to the primal (input/output/intermediate variables) as is equivalent to .

In the code below, we can see what a node in the computation graph looks like when written in python-like pseudocode.

class Node:

value -> number,

grad -> number = 0,

back -> function {},

parents -> Node[] = []

constructor(value -> number):

self.value = value

// other methods ...

Each node has a value (primal), its gradient (or adjoint), a list of its parent nodes and the function that is responsible for propagating the gradients backwards. Since this backwards function must be unique for every operation, the node class also contains methods for each supported operation that describes how the backwards function should behave.

For example, the methods for a multiplication operation, a function or a power operation () might look something like this.

mul(other -> Node or number):

if (typeof other is number) other = Node(other)

out = Node(self.value * other.value) // z = xy

out.parents = [self, other]

out.back = function():

self.grad += other.value * out.grad

other.grad += self.value * out.grad

return out

log():

out = Node(ln(self.value))

out.parents = [self] // z = ln(x)

out.back = function():

self.grad += (1 / self.value) * out.grad

return out

pow(other -> number):

out = Node(self.value^other)

out.parents = [self] // z = x^a

out.back = function():

self.grad += other * self.value^(other - 1) * out.grad;

return out

A typical training loop of the network would look like this.

for _ in epochs:

for x, y in training_samples:

forward = mlp.forward(x) // forward pass, gives us model's prediction

loss = (y - forward)^2

mlp.zero_grad() // clear accumulated gradients from previous iteration

loss.backward() // backpropagation

mlp.step() // gradient descent

When we run the forward pass for the first time, the model outputs are random and garbage as the parameters have not been learned. But the outputs get better (and loss smaller) as we perform several iterations over several epochs on the dataset.

In the training loop, we compute the loss of the output of the forward pass (type Node) and clear the gradients

that were accumulated in the previous iteration before starting the backward pass.

The backward function performs a toposort starting from the output to build the

DAG and runs the back function for all nodes sequentially.

backward(finalOp -> Node):

// ...toposort function

order -> Node[] = toposort(finalOp)

finalOp.grad = 1

order.reverse()

for node in order:

node.back()As this calculates the gradients we need for gradient descent, we finally run the step

function in the MLP class to update the parameters.

I have intentionally left out explaining the rest (and most) of the code and jargon as they do not pertain to the intricacies of autodiff and would make this already wordy article unnecessarily long.

Conclusion

My inspiration for this article built while building an autodiff engine for my numerical computation library using Andrej Karpathy’s micrograd as a reference. If you ignore my erroneous (and sadly irrevokable) attempt at sem-versioning my first npm package, you will know that the library is a long way from being production stable. I find myself adding features at a whim because I never had a serious roadmap to begin with. But it has been riveting to play with scientific computing libraries and go neck-deep into the beautiful mathematics. Here are a few useful resources that I read to educate myself.

- What’s Automatic Differentiation?

- Automatic Differentiation in Machine Learning - A Survey

- Lecture 6: Automatic Differentiation - Roger Grosse

- A Gentle Introduction to torch.autograd

As always, thanks for reading!

Finished on a calm, lonely autumn evening